Qualification of a non-deterministic AI tool under ISO 26262 should start in one place: ISO 26262-8, Clause 11. That is the clause for confidence in the use of software tools. Everything else is supporting structure.

It is tempting to treat AI tool qualification as mainly a validation problem: define the AI use case, create an evaluation set, run the tests, write the report. That is necessary, but it is not enough.

A Tool Confidence Level, or TCL, expresses how much qualification effort ISO 26262 expects before a tool's output can be relied upon: TCL1 needs no qualification measures, TCL2 is intermediate, and TCL3 is the highest level and therefore needs the strongest qualification evidence.

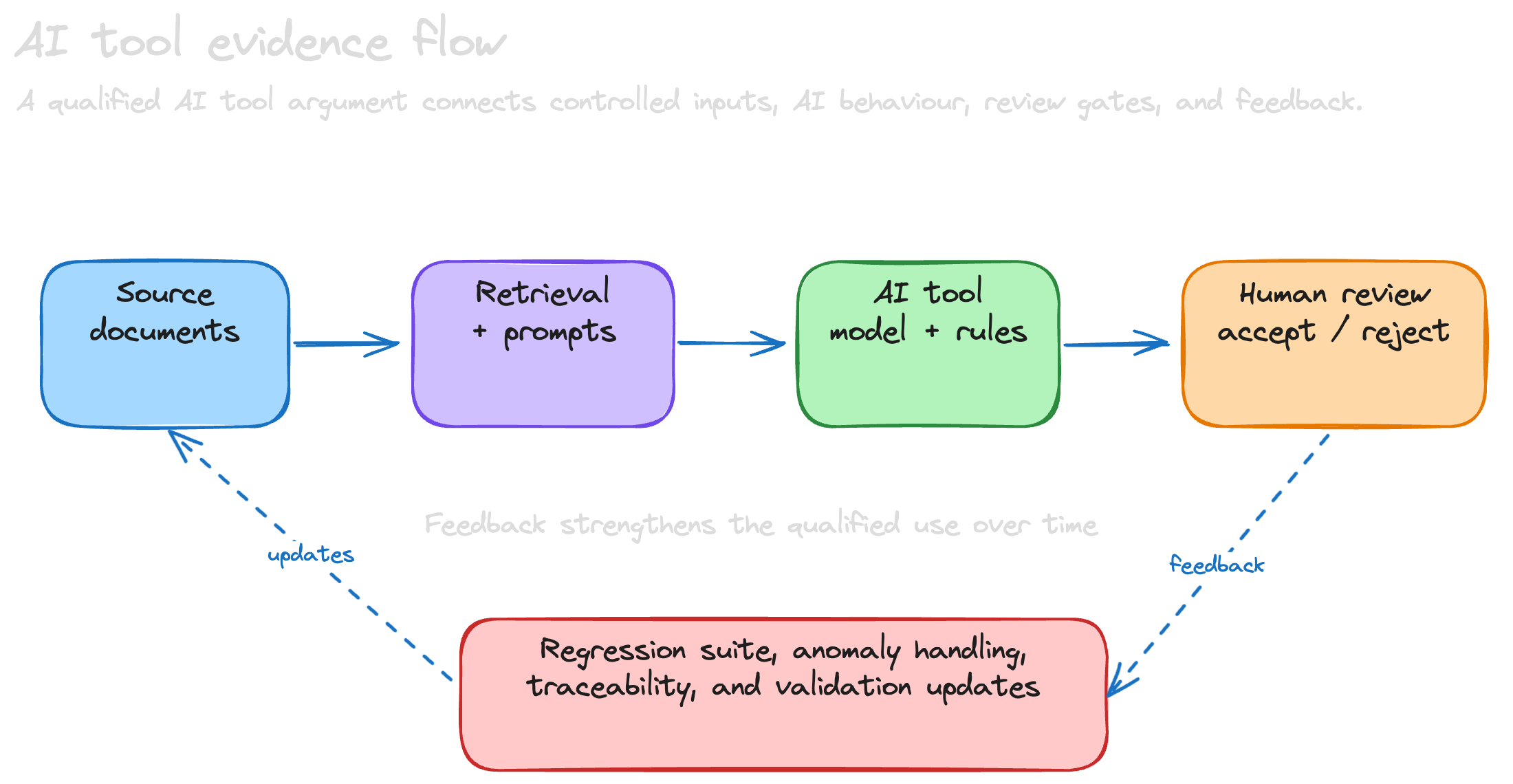

For non-deterministic AI tools, the central problem is not just whether the software runs without crashing. It is whether the qualified use remains controlled when outputs can vary with model behavior, prompts, retrieval context, source documents, sampling settings, or vendor-side model changes.

For a TCL3 AI tool, ISO 26262 also allows an argument based on the way the tool was developed. This is the interesting route for non-deterministic AI-assisted safety tools: requirements reviewers, compliance evaluators, safety-case assistants, evidence checkers, traceability assistants, and similar systems where a black-box validation suite alone will rarely tell the full story.

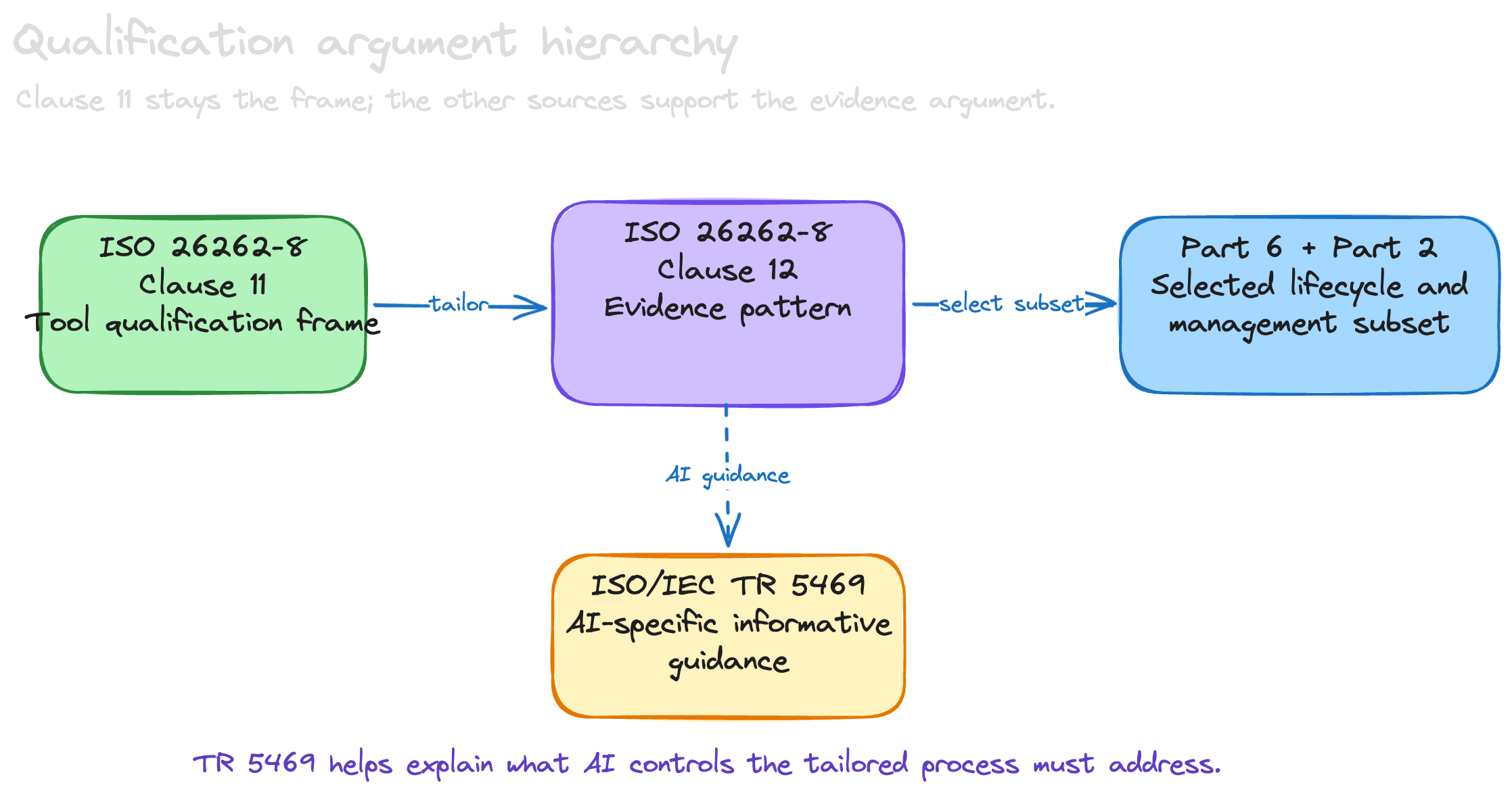

For the AI-specific parts of that argument, ISO/IEC TR 5469:2024 is useful supporting material. It does not replace ISO 26262, but it helps name the issues that a tailored safety-standard process has to control: probabilistic behaviour, data and concept drift, explainability, adversarial inputs, monitoring, and lifecycle evidence for AI systems.

The question is simple:

If Clause 11 is the tool qualification frame, how do we construct a TCL3 argument that a non-deterministic AI tool was developed according to a safety standard?

My answer: use Clause 11 as the normative and technical frame, then use Clause 12 as a tailoring guide. Clause 12 is not the tool qualification route, but its logic is useful: identify the AI tool being qualified, define intended use, show requirements compliance, show suitability for that intended use, control configuration, and verify the validity of the result. That pattern can be used to tailor ISO 26262 Part 6 (and, where needed, Part 2) into a concrete development framework for non-deterministic AI tooling.

Start with the right clause: Part 8 Clause 11

The top-level compliance frame for a non-deterministic AI tool is not Part 6 and it is not Part 8 Clause 12. It is ISO 26262-8, Clause 11: confidence in the use of software tools.

Clause 11 is concerned with a specific risk: a tool malfunction produces an erroneous output or fails to detect an error, and the development process then relies on that output. For a non-deterministic AI tool, the same risk includes an answer presented with more confidence than the evidence supports.

If that output is not sufficiently checked in the next process step, the tool needs a confidence argument. Depending on the tool impact, error detection measures, and ASIL context, that can lead to TCL3.
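The classification step can be sketched as a small lookup. The TI (tool impact) and TD (tool error detection) class names are not used elsewhere in this article; they follow the classification scheme of ISO 26262-8 Clause 11 as I read it, and the table in the standard should be checked before relying on this sketch.

```python
from enum import IntEnum


class ToolImpact(IntEnum):
    TI1 = 1  # a malfunction cannot introduce or mask an error in a safety-related item
    TI2 = 2  # all other cases


class ToolErrorDetection(IntEnum):
    TD1 = 1  # high confidence that a malfunction is prevented or detected
    TD2 = 2  # medium confidence
    TD3 = 3  # all other cases


def tool_confidence_level(ti: ToolImpact, td: ToolErrorDetection) -> str:
    """Return the TCL for a (TI, TD) pair, as understood from Part 8 Clause 11."""
    if ti is ToolImpact.TI1:
        return "TCL1"  # no impact on safety requirements: TD is irrelevant
    return {
        ToolErrorDetection.TD1: "TCL1",
        ToolErrorDetection.TD2: "TCL2",
        ToolErrorDetection.TD3: "TCL3",
    }[td]


# An AI reviewer whose output is not sufficiently checked downstream:
level = tool_confidence_level(ToolImpact.TI2, ToolErrorDetection.TD3)
```

The point of writing it down this way is that the TD class is an argument about the next process step, not a property of the AI model. Strengthening downstream checks is a legitimate way to lower the TCL.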

For TCL3, the qualification report needs to make clear:

- which AI tool version and configuration is qualified,

- which AI use cases are qualified,

- which execution environment is qualified,

- which AI outputs are safety-relevant,

- which erroneous or unsupported AI outputs were considered,

- which qualification method or combination of methods was selected, and

- why the evidence is valid for the intended project use.

That is the frame. Everything else supports that frame.
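One way to make the "which version and configuration" items concrete is a frozen configuration record with a fingerprint that the qualification report can cite. This is a minimal sketch: the class, field names, and example values are hypothetical, and a real tool would likely need more fields (libraries, guardrails, sampling settings).

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class QualifiedConfiguration:
    """Hypothetical record of what a qualification claim covers."""
    tool_version: str
    model_id: str
    prompt_sha256: str
    retrieval_index_id: str
    environment: str
    qualified_use_cases: tuple

    def fingerprint(self) -> str:
        # Canonical JSON (sorted keys) so the hash does not depend on field order.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Illustrative values only; none of these identifiers refer to a real product.
baseline = QualifiedConfiguration(
    tool_version="1.4.0",
    model_id="example-model-2024-06",
    prompt_sha256=hashlib.sha256(b"You are a requirements reviewer.").hexdigest(),
    retrieval_index_id="index-0007",
    environment="python3.11-linux-x86_64",
    qualified_use_cases=("requirements review",),
)

# A vendor-side model update yields a different fingerprint,
# so the report no longer matches the running tool.
candidate = replace(baseline, model_id="example-model-2024-09")
```

If the fingerprint in the report and the fingerprint of the deployed tool differ, the qualified-use claim does not automatically apply to the deployed tool.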

The useful route: evaluation of the tool development process

ISO 26262-8:11.4.8 provides the important opening. It addresses evaluation of the tool development process. In simple terms, if this method is selected, the development process used for the tool has to comply with an appropriate standard, and the evaluation has to provide evidence that a suitable software development process was applied.

There is also a very important practical note in the tool qualification method tables: no safety standard is fully applicable to the development of software tools; instead, a relevant subset of requirements can be selected.

That sentence matters. It prevents the argument from becoming absurd.

A non-deterministic AI compliance evaluator is not an embedded automotive software item. It does not have a vehicle-level safe state. It does not have an HSI in the normal ECU sense. It does not have calibration data in the same way embedded software does. It also has AI-specific lifecycle elements: model selection, prompt control, retrieval control, evaluation datasets, guardrails, review workflow, and monitoring of non-deterministic output behavior. Applying Part 6 literally and completely would be performative compliance.

But applying a relevant subset of Part 6 and Part 2, adapted to the realities of non-deterministic AI, can be exactly the right thing to do.

Use Clause 12 to tailor Part 6 and Part 2 for AI tooling

Part 6 is the ISO 26262 software development part. For an AI tool, it provides lifecycle logic for systematic development: AI tool requirements, architecture, implementation, verification, integration, validation, and control of the development environment.

Part 2 is also relevant when the AI tool is developed as a serious safety-related product rather than an informal prompt-based prototype. It contributes the management framework: safety culture, competence, responsibilities, planning, configuration discipline, anomaly handling, confirmation measures, and assessment expectations.

Clause 12 gives a useful way to decide how much of Part 6 and Part 2 to bring across. In a strict sense, using Clause 12 this way is an analogy, not a direct application. If you want to call it a “misuse,” it is a controlled and explicit one: we are not claiming the tool is qualified under Clause 12. We are borrowing Clause 12’s evidence logic to build a practical development framework for the Clause 11 qualification method, specifically for tools whose outputs may vary across runs, model versions, prompts, retrieval context, or source corpora.

For AI tool development, the terminology changes, but the engineering intent maps well:

| Part 6 concept | AI tool development equivalent |
|---|---|
| Software safety requirements | AI tool requirements and safety-relevant requirements: what the AI tool must do correctly, what it must refuse or flag, and what evidence it must provide before its output can be relied upon. |
| Software architecture | AI tool architecture: ingestion, retrieval, prompt orchestration, model invocation, deterministic checks, generation, citation, report creation, review workflow, logging, guardrails, and fallback behavior. |
| Software unit design and implementation | Implementation of AI tool modules, prompts, schemas, retrieval pipelines, deterministic validators, review gates, and configuration-controlled model interfaces. |
| Software unit verification | Unit tests, static analysis, prompt/retrieval tests, boundary tests, metamorphic tests, regression tests, and golden-case evaluations for AI tool modules. |
| Software integration and verification | Verification that ingestion, retrieval, prompting, generation, deterministic checks, and human review work together across representative AI tool workflows. |
| Testing in target environment | Validation of the qualified AI tool package in the intended execution environment, with pinned model/configuration assumptions and representative qualified use cases. |

This is not a claim that Part 6 or Part 2 applies directly and completely. It is a claim that Part 6 provides the selected software lifecycle basis, Part 2 provides the selected safety-management basis, and Clause 12 provides the tailoring pattern for deciding what evidence is needed for the AI tool’s intended use.

Where Clause 12 fits

Part 8 Clause 12 is the qualification route for software components. It is not the tool qualification clause.

So yes: using Clause 12 as a guide for tool development is a bit of a bend. It is not a literal application of Clause 12, and it should not be presented as one.

But it is an acceptable and useful bend if it is made explicit. Clause 11 tells us that, for TCL3, one possible qualification route is to evaluate the tool development process and show development according to a safety standard. It also acknowledges the practical problem: no safety standard is fully applicable to software tools, so a relevant subset has to be selected. Clause 12 gives us a disciplined way to think about that selection.

In its normal role, Clause 12 asks whether a software component can be reused in an ISO 26262 context. It requires the component qualification argument to include, among other things, specification of the software component, evidence that the component complies with its requirements, evidence that it is suitable for its intended use, evidence that the development process for the component is based on an appropriate standard, and a qualification plan.

Those are exactly the questions we need when tailoring Part 6 and Part 2 for a non-deterministic AI tool. We are not saying “Clause 12 qualifies the tool.” We are saying “Clause 12 gives a useful evidence pattern for deciding which Part 6 and Part 2 requirements matter for this AI tool, in this use case, under Clause 11.” Clause 12 asks the right practical questions:

| Clause 12 evidence logic | Tool development framework implication |
|---|---|
| Identify the software component | Identify the exact AI tool, version, configuration, execution environment, model versions, prompts, retrieval index, source corpus, libraries, guardrails, and qualified package. |
| Define intended use | Define qualified AI use cases, input types, output types, users, assumptions, excluded use cases, human-review expectations, and downstream checks. |
| Show requirements compliance | Tailor Part 6 into AI tool requirements, architecture, implementation, prompt/retrieval controls, unit verification, integration, and validation evidence. |
| Show suitability for intended use | Build representative validation cases and show that the evidence is valid for the intended ISO 26262 activities. |
| Control configuration | Use Part 2-style management controls: responsibilities, competence, change control, anomaly handling, release discipline, and confirmation measures. |
| Verify the validity of qualification results | Review whether the qualification argument remains valid for the intended project context, model behavior, retrieval corpus, and TCL3 claim. |

So Clause 12 is useful in two different ways, and the distinction matters. First, in its normal role, it supports component-level evidence for reused software elements inside the AI tool. Second, by analogy, it provides an evidence pattern for tailoring Part 6 and Part 2 into an AI tool development framework. The first use is direct. The second use is interpretive, but defensible because it supports the Clause 11 development-process qualification route rather than replacing it.

Common candidates for this direct, component-level use of Clause 12 include:

- a document ingestion or OCR pipeline,

- a retrieval/indexing component,

- an embedding model,

- a non-deterministic LLM or other generative model,

- a prompt orchestration layer,

- a deterministic rules or requirements classifier used to check AI output,

- a report generator,

- a human-review workflow component, or

- third-party packages that influence the qualified AI output.

For these AI components, a Clause 12-style mini qualification can provide useful evidence: identify the component, define its intended use inside the AI tool, capture its configuration, define requirements, evaluate known limitations, verify it in context, and document the result.

This component view also connects back to the software lifecycle argument.

Part 6 itself also points in this direction. When a pre-existing software architectural element is used without modification to meet assigned safety requirements, and it was not developed according to ISO 26262, Part 6 refers to qualification under Part 8 Clause 12. It also makes clear that using qualified software components does not remove the need for later integration and software testing activities.

That is the right mental model for an AI tool as well: Clause 12 can support the component-level evidence, but the tool-level confidence argument still lives in Clause 11.

Once the argument is framed this way, the remaining question is not whether to use AI-specific guidance, but where to place it. This is where ISO/IEC TR 5469 fits naturally: not above ISO 26262, and not beside Clause 11 as a separate route, but underneath the tailoring rationale as evidence that the selected controls address the real properties of AI systems.

What ISO/IEC TR 5469 adds

ISO/IEC TR 5469:2024 is therefore useful here, but in a different way from ISO 26262. It is an informative technical report on artificial intelligence and functional safety, not a new normative qualification route for automotive tools. I would use it as supporting rationale for the AI-specific tailoring decisions inside the Clause 11 argument.

The value of TR 5469 is that it names the AI-specific issues that the ordinary ISO 26262 software lifecycle language does not make explicit enough on its own.

The relevant guidance includes AI lifecycle alignment and functional safety artefacts (TR-5469:11.2 and 11.4), verification and validation challenges for AI systems (TR-5469:9), non-predictable or probabilistic behaviour (TR-5469:9.2.4), data and concept drift (TR-5469:8.4.2.1, 8.4.2.2 and 9.2.5), explainability and transparency (TR-5469:8.3 and 9.6), adversarial and malicious inputs (TR-5469:8.5), and monitoring or incident feedback after deployment (TR-5469:9.5).

That makes TR 5469 a useful bridge between the abstract ISO 26262 tailoring argument and the concrete engineering controls needed for non-deterministic AI tooling. It supports requirements such as pinned model and prompt configurations, documented training/validation/test data relevance, retrieval-index control, representative evaluation sets, drift monitoring, human-review constraints, explainability expectations, and incident feedback into the validation suite.

Used carefully, the hierarchy becomes clean: Clause 11 is the tool qualification frame; Clause 12 is the evidence/tailoring pattern; Part 6 and Part 2 provide the selected safety-standard framework; and TR 5469 provides AI-specific guidance for what that tailored framework needs to address.

The argument I would make

I would not write:

“The AI tool is qualified according to Part 8 Clause 12.”

That is too easy to challenge. Clause 12 is not the tool qualification clause.

I would write:

“The AI tool is qualified according to ISO 26262-8:11. For the TCL3 qualification method ‘development in accordance with a safety standard’ / evaluation of the tool development process, ISO 26262-8:12 was used as a tailoring pattern to select and adapt relevant requirements from ISO 26262 Part 6 and Part 2. ISO/IEC TR 5469:2024 was used as informative AI-specific guidance for the tailoring rationale. Part 6 provides the AI software lifecycle evidence; Part 2 provides the safety-management evidence; Clause 12-style evidence is also used for reused and pre-existing AI/software components where applicable. The selected subset, tailoring rationale, and resulting evidence are documented in the AI tool qualification report.”

That argument is considerably more defensible because it respects the structure of the standard:

- Clause 11 remains the tool qualification route.

- Clause 12 is used consciously as a tailoring analogy, not as tool qualification authority.

- Part 6 provides the safety-standard AI/software lifecycle subset.

- Part 2 provides the safety-management subset.

- TR 5469 provides AI-specific supporting guidance, not normative replacement requirements.

- Clause 12 also supports reused component evidence.

- Tailoring is explicit, not hidden.

- The claim is limited to the qualified use cases and configuration.

Align early with the assessor and customer

There is one practical point that matters as much as the clause argument: align the tailoring strategy before the assessment becomes formal. ISO 26262 permits tailoring, but a Tier 1 customer, OEM, or TÜV assessor may still have a view on the minimum evidence expected for a TCL3 tool developed according to a safety standard.

The qualification plan should therefore make the tailoring visible early: which Part 6 requirements are selected, which are adapted, which are out of scope, which Clause 12-style component arguments are used, and which validation activities close the remaining confidence gap. If that structure is reviewed only at the end, the team may discover too late that the assessor expected more explicit traceability, more independence in verification, or a stronger validation set.

If an assessor challenges the Clause 12 analogy, do not defend it as a new qualification route. Bring the discussion back to Clause 11: the selected method is evaluation of the tool development process, Clause 11 permits a relevant subset of a safety standard, and Clause 12 is only the documented rationale for how that subset was selected and justified.

What the dossier should contain

If I were preparing this argument for assessment, I would expect at least the following work products. They are not all equally difficult.

In practice, qualification arguments most often break down at the interfaces: weak intended-use definition, unclear tailoring rationale, missing traceability from tool requirements to verification, insufficient validation data for realistic project use, or reused components whose limitations are not carried into the tool-level safety argument.

Foundation — items 1–3

1. AI tool criteria evaluation report

This is the Clause 11 front door: tool impact, error detection, TCL determination, intended use, inputs, expected outputs, environment, constraints, and qualified configuration.

2. Tool qualification plan

This explains why the selected qualification methods are sufficient. For the development-process route, it identifies the chosen safety standard subset and the rationale for tailoring, so the whole argument is coherent before the evidence is judged.

This is typically one of the most effort-intensive work products.

3. AI tool safety management framework

This is the Part 2 contribution. Define responsibilities, required AI and safety competence, review and approval flow, configuration/change management, anomaly handling, release criteria, independence expectations, and how confirmation measures will be performed for the AI tool qualification evidence.

Evidence — items 4–9

4. Safety-standard applicability matrix

Map selected ISO 26262 Part 6 and Part 2 requirements to tool development evidence. Mark each requirement as applicable, adapted, not applicable, or covered by another activity. For every exclusion, give the rationale. Do not bury this in prose. Assessors need to see the map.

This is typically one of the most effort-intensive work products.
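A matrix like this is easy to keep honest with a mechanical check. The sketch below is an assumption about how one might structure it: the status names mirror the four markings above, while the requirement labels and evidence identifiers are purely illustrative. Requiring a rationale for adapted entries as well as exclusions is my own addition.

```python
from dataclasses import dataclass

STATUSES = {"applicable", "adapted", "not_applicable", "covered_elsewhere"}


@dataclass(frozen=True)
class MatrixEntry:
    requirement: str      # label for a selected Part 6 or Part 2 requirement
    status: str           # one of STATUSES
    evidence: str = ""    # hypothetical work-product identifier
    rationale: str = ""   # why the requirement was adapted or excluded


def matrix_findings(entries):
    """Return a list of human-readable gaps in the applicability matrix."""
    findings = []
    for entry in entries:
        if entry.status not in STATUSES:
            findings.append(f"{entry.requirement}: unknown status {entry.status!r}")
        elif entry.status in ("applicable", "adapted") and not entry.evidence:
            findings.append(f"{entry.requirement}: no evidence linked")
        elif entry.status != "applicable" and not entry.rationale:
            findings.append(f"{entry.requirement}: missing rationale")
    return findings


matrix = [
    MatrixEntry("Part 6: software unit verification", "adapted",
                evidence="VER-REPORT-01",
                rationale="Adds prompt and retrieval regression tests."),
    MatrixEntry("Part 6: testing in target environment", "applicable"),
    MatrixEntry("Part 6: hardware-software interface", "not_applicable"),
]
gaps = matrix_findings(matrix)
```

Here the second entry is flagged for missing evidence and the third for an exclusion without rationale, which is exactly what an assessor would ask about first.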

5. AI tool requirements specification

This is another common failure point. Define functional requirements, safety-relevant AI tool requirements, interface requirements, constraints, expected outputs, error handling, logging, and misuse cases. Include retrieval boundaries, source citation expectations, model/version constraints, prompt constraints, confidence handling, non-determinism assumptions, and human-review assumptions.

6. AI tool architecture and safety mechanisms

Show the internal structure. Identify where erroneous, unsupported, or non-reproducible AI outputs can be introduced and where they are prevented or detected. Examples include schema validation, deterministic checks, source traceability, locked prompts, model/version pinning, retrieval-index pinning, regression suites, review gates, audit logs, output diffing, and confidence thresholds.

7. Verification evidence

Provide review records, static analysis, unit tests, integration tests, regression tests, requirements coverage, anomaly reports, and evidence that failures are corrected or controlled.
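Requirements coverage in particular lends itself to an automated gap report. A minimal sketch, assuming hypothetical requirement identifiers and a simple test-record format with `verifies` and `result` fields:

```python
def untraced_requirements(requirement_ids, test_records):
    """Return requirements that no passing test record claims to verify."""
    verified = set()
    for record in test_records:
        if record["result"] == "pass":
            verified.update(record["verifies"])
    return sorted(set(requirement_ids) - verified)


# Hypothetical identifiers, for illustration only.
requirements = ["TR-001", "TR-002", "TR-003"]
records = [
    {"test": "test_citation_check", "verifies": ["TR-001"], "result": "pass"},
    {"test": "test_refusal_out_of_scope", "verifies": ["TR-002"], "result": "fail"},
]

open_items = untraced_requirements(requirements, records)
```

A failed test does not count as coverage here, so `TR-002` stays open until the anomaly is corrected or controlled.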

8. AI tool validation evidence

Even if the main claim is “developed according to a safety standard,” validation is still the evidence that the AI tool actually works for the qualified purpose. ISO 26262-8:11.4.9 expects validation measures to show that the tool complies with its specified purpose and that malfunctions and erroneous outputs are analysed. For TCL3, the validation set needs to be representative of the qualified use cases, not merely convenient examples.

This is typically one of the most effort-intensive work products.
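Because outputs can vary across runs, a single pass per validation case says little. The sketch below repeats each case and reports both correctness and run-to-run agreement. The stub tool, the metric names, and the number of runs are assumptions for illustration; acceptance thresholds would have to be justified in the qualification plan.

```python
from collections import Counter
from itertools import cycle


def evaluate_case(tool, case_input, expected, runs=5):
    """Run one validation case several times and summarise the spread."""
    outputs = [tool(case_input) for _ in range(runs)]
    pass_rate = sum(output == expected for output in outputs) / runs
    # Share of runs that produced the most frequent output.
    agreement = Counter(outputs).most_common(1)[0][1] / runs
    return {"pass_rate": pass_rate, "agreement": agreement}


def make_stub_tool(outputs):
    """Stand-in for the AI tool: replays a fixed sequence to mimic variation."""
    replay = cycle(outputs)
    return lambda _case_input: next(replay)


stub = make_stub_tool(
    ["compliant", "compliant", "compliant", "compliant", "non-compliant"]
)
result = evaluate_case(stub, "requirement text under review", "compliant", runs=5)
```

Reporting agreement separately matters: a case can be reproducibly wrong (high agreement, low pass rate) or correct only on average (low agreement), and the two call for different controls.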

9. AI component qualification evidence

For reused internal components, provide Clause 12-style evidence: component identification, intended use, requirements, configuration, prior verification, known anomalies, suitability argument, and integration constraints. The weak spot is usually not naming the component; it is proving that the component is suitable in this specific tool context and that its limitations are controlled by integration tests, wrappers, monitors, review gates, or usage restrictions.

This is typically one of the most effort-intensive work products.

Closure — items 10–11

10. AI-specific TR 5469 tailoring note

Add a short note mapping the selected AI-specific controls to ISO/IEC TR 5469 topics: lifecycle artefacts, data relevance, V&V limitations, drift, explainability, adversarial inputs, monitoring, and incident feedback. For example, link lifecycle and artefact expectations to TR-5469:11.2 and 11.4, and link V&V, probabilistic behaviour, drift, explainability, and monitoring to TR-5469:9, 9.2.4, 9.2.5, 9.5 and 9.6. Keep it clearly informative: TR 5469 supports the rationale, while ISO 26262-8:11 remains the qualification frame.

11. Qualification report

The final report ties it all together: qualified version, qualified use cases, TCL, selected methods, safety-standard subset, evidence summary, validation result, limitations, residual risks, and conditions of use.

The most common mistake

The most common mistake is to claim too much.

Do not claim the AI tool is “ISO 26262 compliant” in a generic sense. That phrase creates more problems than it solves. AI tools are qualified for specific use cases, configurations, environments, model/prompt/retrieval assumptions, and human-review assumptions. The qualification is not universal.

A better claim is narrower:

Qualified claim:

“This AI tool version, in this configuration, with these model/prompt/retrieval assumptions, for these use cases, in this environment, has been qualified under ISO 26262-8:11 using development according to a selected safety-standard framework tailored from Part 6 and Part 2, guided by Clause 12 evidence logic, and supported by validation and component-level evidence.”

That is precise. It is auditable. It gives the assessor something concrete to evaluate.

What this means for non-deterministic AI safety tools

For non-deterministic AI tools used in ISO 26262 activities, this structure is essential. Pure output validation is necessary, but it is not sufficient. You also need to show that the tool development process controls the failure modes that matter:

Process-level failures:

- unsupported clause interpretation,

- missing retrieval evidence,

- hallucinated requirements,

- wrong ASIL propagation,

- lost traceability,

- incorrect document ingestion, and

- review workflows that allow unchecked AI output into the safety case.

AI-specific failures:

- non-reproducible model behavior,

- silent model or prompt changes,

- retrieval index drift over time,

- data drift and concept drift between the validation corpus and actual project inputs,

- confidence calibration failures where output appears authoritative but is not supported by the cited evidence, and

- weak explainability where reviewers cannot see why an answer was produced.

A Part 6-style development lifecycle helps because it forces the team to specify, design, implement, verify, integrate, and validate the AI tool systematically. Clause 12-style evidence helps because modern AI tools are rarely built from scratch. Clause 11 ties both back to the only question that matters: can the user rely on this AI tool for the qualified ISO 26262 activity?

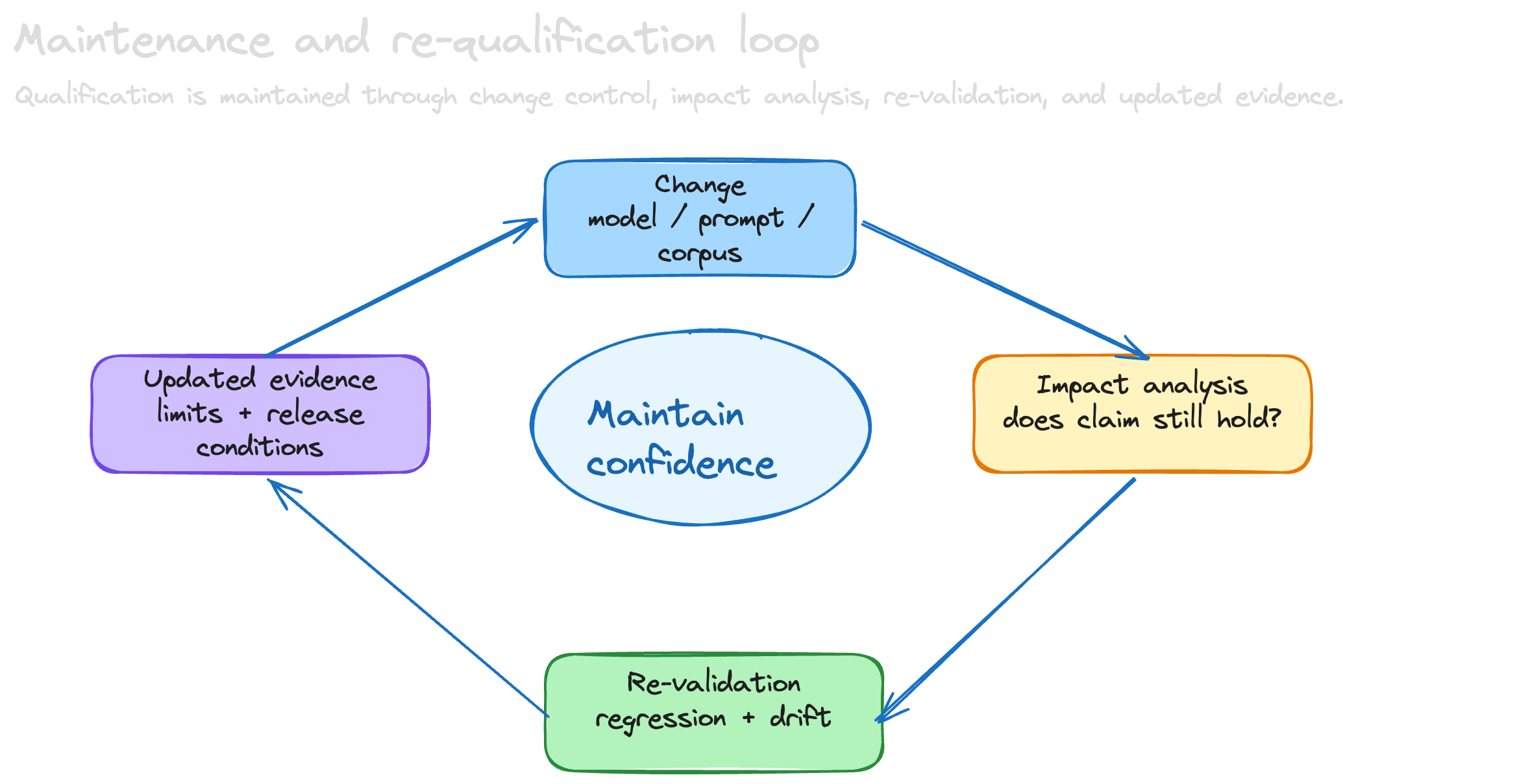

Maintenance and re-qualification triggers

Qualification is not a one-time badge. The qualification argument has to define what changes require impact analysis, partial re-verification, or full re-qualification. For non-deterministic AI tools, this includes changes to the qualified tool version, execution environment, configuration, safety-relevant dependencies, ingestion pipeline, retrieval pipeline, prompts, models, guardrails, review workflow, and validation assumptions.

Toolchain versioning needs special attention when the underlying LLM is provided through an external API. If the vendor can update model behavior silently, or without a version signal that is meaningful for qualification, the qualified configuration should include compensating controls such as pinned model identifiers where available, vendor change notifications, regression monitoring, periodic re-validation, or contractual/API constraints on model updates.

Trigger list

For non-deterministic AI tools, the trigger list needs to be even more explicit: model changes, prompt changes, retrieval-index changes, embedding model changes, source corpus changes, output schema changes, guardrail changes, and workflow changes that affect human review can all invalidate parts of the previous evidence. The practical rule is simple: if a change can alter a safety-relevant output or the confidence argument for that output, it needs controlled impact analysis before the qualified use claim is preserved.
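The practical rule can be enforced mechanically by comparing the running configuration against the qualified baseline. A minimal sketch, assuming the configuration is available as a flat dictionary; the key names and values are hypothetical:

```python
def changed_items(baseline: dict, current: dict) -> list:
    """Return the configuration items that differ from the qualified baseline."""
    keys = baseline.keys() | current.keys()
    return sorted(key for key in keys if baseline.get(key) != current.get(key))


def qualified_claim_preserved(baseline, current, analysed=frozenset()):
    """True only if every changed item has a recorded impact analysis."""
    return all(item in analysed for item in changed_items(baseline, current))


# Illustrative values only.
qualified = {
    "model_id": "example-model-2024-06",
    "prompt_sha256": "ab12",
    "retrieval_index_id": "index-0007",
    "output_schema": "v3",
}
running = dict(qualified, model_id="example-model-2024-09", embedding_model="emb-2")

triggers = changed_items(qualified, running)
ok_before = qualified_claim_preserved(qualified, running)
ok_after = qualified_claim_preserved(qualified, running, analysed={"model_id", "embedding_model"})
```

Note that an item added on only one side, such as a new embedding model, counts as a change. Whether an analysed change needs partial re-verification or full re-qualification remains an engineering decision that this check does not make.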

TR 5469 also strengthens the maintenance argument: drift monitoring, incident feedback, and updates to validation scenarios are not operational nice-to-haves; they are part of keeping confidence in an AI system over time.

Ownership should be explicit: the tool developer usually owns the generic impact analysis for changes to the qualified tool package, while the tool user owns the project-specific decision that the changed tool is still valid for the intended use. For AI tools, both sides need to cooperate because model, prompt, corpus, and workflow changes can sit on either side of that boundary.

Bottom line

Developing a non-deterministic AI tool according to a safety standard is a viable TCL3 qualification strategy. But the argument should be made carefully:

- Use ISO 26262-8:11 as the tool qualification frame.

- Use ISO 26262-8:12 in two clearly separated roles:

  - (interpretive) Tailoring pattern: use Clause 12 as the explicit evidence pattern for deciding how to tailor Part 6 and Part 2, not as the tool qualification clause.

  - (direct) Component qualification: use Clause 12 as component-level evidence for reused or pre-existing AI/software components inside the tool.

- Use ISO 26262 Part 6 as the selected AI/software development lifecycle subset.

- Use ISO 26262 Part 2 as the selected safety-management subset.

- Use ISO/IEC TR 5469:2024 as informative AI-specific guidance for V&V, drift, explainability, monitoring, and lifecycle artefacts.

- Document the tailoring explicitly.

- Support the process claim with validation evidence.

- Limit the qualification claim to the specific AI tool version, configuration, model/prompt/retrieval assumptions, environment, and use cases.

If you do that, the argument is not “we used ISO 26262 words while building a tool.” It is a real safety-standard development argument for non-deterministic AI tooling.

Scoped, tailored, traceable, reviewable, and maintained under change control.